Reap Maximum Profits with the Best E-Commerce Website

An eCommerce business is one of the best businesses you can start with a very low investment, and many people have earned substantial revenue from it. With more and more people preferring to buy products online, there is ample scope for eCommerce anywhere in the world, and Singapore is no different. Many kinds of products can be sold through an eCommerce website. If you can invest a lot of money, you can manufacture your own products and sell them online. You can also sell other people's products through your site. Either way, the main requirement for a successful business is a well-planned eCommerce website design.

Understanding the Expectations from an E-Commerce Website

The best eCommerce website design includes features that help both customers and sellers use the website comfortably and complete their tasks. Customers must be able to browse the site easily and complete their purchases. People expect the purchase experience to be quick and smooth. A major reason people abandon their carts is that the path from selecting a product to checking out is not smooth. Often they have to go back and forth before they can complete the purchase.

Good eCommerce website design ideas include features that help customers easily find what they want. Easy search filters are a necessity on any site. Customers should be able to find what they need by typing just a couple of letters and be led quickly to the section that has those products. They must also be able to see products related to what they are searching for. This helps them buy related items easily and also increases business for your site.
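To make the idea concrete, here is a minimal sketch of search-as-you-type suggestions and related products. The `Product` class, the sample catalog, and the function names are all hypothetical, and a real store would likely rely on its platform's search engine rather than a list scan.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    category: str


# Hypothetical sample catalog, for illustration only.
CATALOG = [
    Product("Running Shoes", "Footwear"),
    Product("Rubber Sandals", "Footwear"),
    Product("Rucksack", "Bags"),
    Product("Sports Socks", "Footwear"),
]


def suggest(prefix, catalog=CATALOG):
    """Return products whose name starts with the typed letters (case-insensitive)."""
    typed = prefix.strip().lower()
    if not typed:
        return []
    return [p for p in catalog if p.name.lower().startswith(typed)]


def related(product, catalog=CATALOG):
    """Return other products from the same category as the given product."""
    return [p for p in catalog if p.category == product.category and p != product]
```

Typing "ru" would surface every product whose name begins with those letters, and `related` could feed a "you may also like" section on the product page.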

Good Content Is Essential For Increasing Sales

People are not satisfied with seeing just one or two photos and the price. They like to see the product in detail, including how it is used and what its features are. This means that eCommerce website development should make it easy to upload multiple photos and videos of each product. Customers are also interested in seeing photos of actual customers using the product, so the ability to add Instagram photos is an essential feature.

Products and prices keep changing. New products, and new features in existing products, must be reflected on the site immediately so that you can take advantage of them and increase sales. For this, the site owner must be able to add, delete or change content easily without calling on the developers for help every time. The eCommerce website development process must therefore include a good content management system that allows the site owner to alter content easily.

There is no doubt that the site must be mobile-friendly. Today, more and more people use their smartphones to make their purchases. Your site must give the same experience on mobile devices as it does on the desktop. You need a responsive website that functions the same way on any device. The pages must load fast, and the images and videos must be as attractive as they are on the desktop site.

Order Processing and Inventory Management

It is not just customers who need the site to be easy to operate. Site owners must find it easy too, because many functions on an eCommerce site have to be carried out very quickly. One of the most important is order processing. All orders, whether received directly from customers or through other eCommerce sites, must be processed properly and in order of receipt. Nobody should have reason to complain that their order was delayed or incomplete. For this, the site needs excellent order processing features.
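As a rough illustration of processing in order of receipt, the sketch below merges orders from several channels into one sequence sorted by the time each was received. The `Order` fields and the channel names are assumptions made for this example, not part of any particular platform.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Order:
    order_id: str
    source: str            # hypothetical channel name, e.g. "own-site"
    received_at: datetime


def processing_sequence(orders):
    """Return orders sorted so that the earliest received is processed first."""
    # sorted() is stable, so orders with identical timestamps keep their input order.
    return sorted(orders, key=lambda order: order.received_at)


orders = [
    Order("B-2", "marketplace", datetime(2024, 1, 1, 9, 30)),
    Order("A-1", "own-site", datetime(2024, 1, 1, 9, 0)),
    Order("A-3", "own-site", datetime(2024, 1, 1, 10, 15)),
]

for order in processing_sequence(orders):
    print(order.order_id, order.source)
```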

The web development company must also ensure that it is easy to manage inventory on the eCommerce site. Proper inventory management helps ensure that products don't go out of stock. There should be an automatic reordering facility that triggers when a product reaches a low stock level. The website must also make sure that products that are not in stock are not shown, so that customers are not disappointed by ordering an item they cannot receive.
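The two inventory rules described above can be sketched as follows. This is a minimal example under stated assumptions: the `StockItem` fields, the per-item `reorder_level`, and the function names are hypothetical, and an actual store would hook these checks into its own ordering system.

```python
from dataclasses import dataclass


@dataclass
class StockItem:
    sku: str
    quantity: int
    reorder_level: int  # assumed threshold at or below which a reorder is triggered


def visible_items(items):
    """Items that can be shown to customers: only those actually in stock."""
    return [item for item in items if item.quantity > 0]


def items_to_reorder(items):
    """Items whose stock has fallen to or below their reorder level."""
    return [item for item in items if item.quantity <= item.reorder_level]


stock = [
    StockItem("SHOE-42", quantity=12, reorder_level=5),
    StockItem("BAG-07", quantity=3, reorder_level=5),
    StockItem("SOCK-01", quantity=0, reorder_level=10),
]

print([item.sku for item in visible_items(stock)])
print([item.sku for item in items_to_reorder(stock)])
```

Note that an item can be both visible and due for reorder, which is the point: the reorder should go out before the product disappears from the storefront.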

Intelligent Reporting and Security

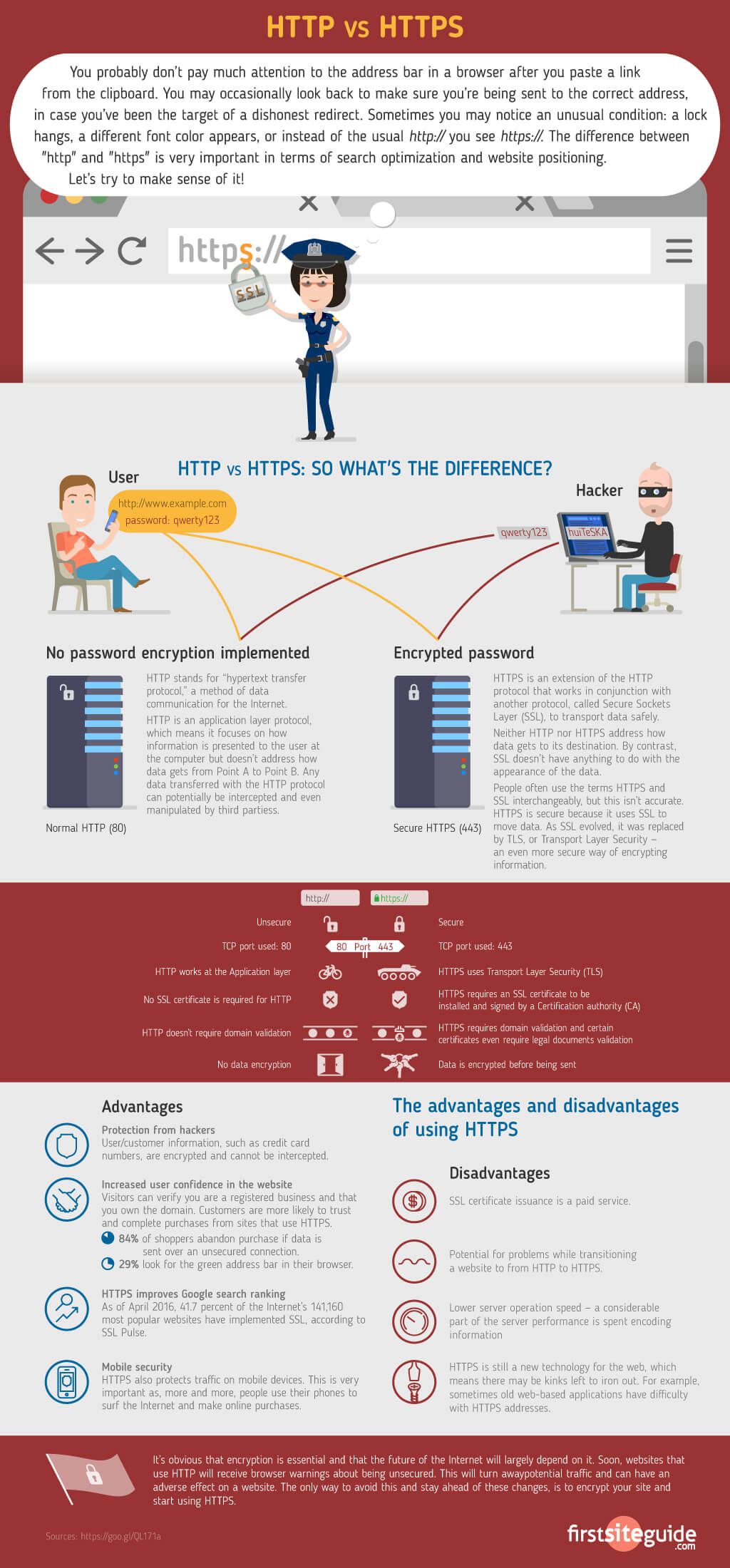

Every business needs reports to move forward in the right direction. The website must provide any required information with just a click. Daily, weekly and monthly sales figures must be easily accessible, and the site should generate inventory reports as well. Sales analysis is very important for planning future marketing campaigns. Strong security must be in place to prevent leakage of customer or payment details, so that customers feel confident about buying products on the site. The site must also offer secure payment methods.
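As an illustration of the reporting side, the sketch below totals a list of sales by day, by ISO week and by month. The sample figures and the `totals_by` helper are made up for this example; a real site would read these values from its order database.

```python
from collections import defaultdict
from datetime import date

# Hypothetical sales records: (date of sale, amount).
SALES = [
    (date(2024, 1, 1), 120.0),
    (date(2024, 1, 1), 80.0),
    (date(2024, 1, 3), 50.0),
    (date(2024, 2, 10), 200.0),
]


def totals_by(sales, key):
    """Sum sale amounts grouped by whatever period the key function returns."""
    totals = defaultdict(float)
    for day, amount in sales:
        totals[key(day)] += amount
    return dict(totals)


daily = totals_by(SALES, lambda d: d.isoformat())
weekly = totals_by(SALES, lambda d: tuple(d.isocalendar())[:2])  # (ISO year, ISO week)
monthly = totals_by(SALES, lambda d: (d.year, d.month))

print(daily)
print(weekly)
print(monthly)
```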

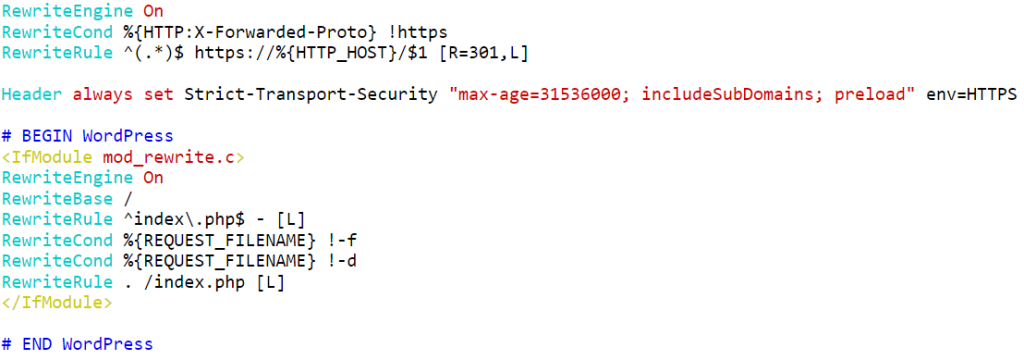

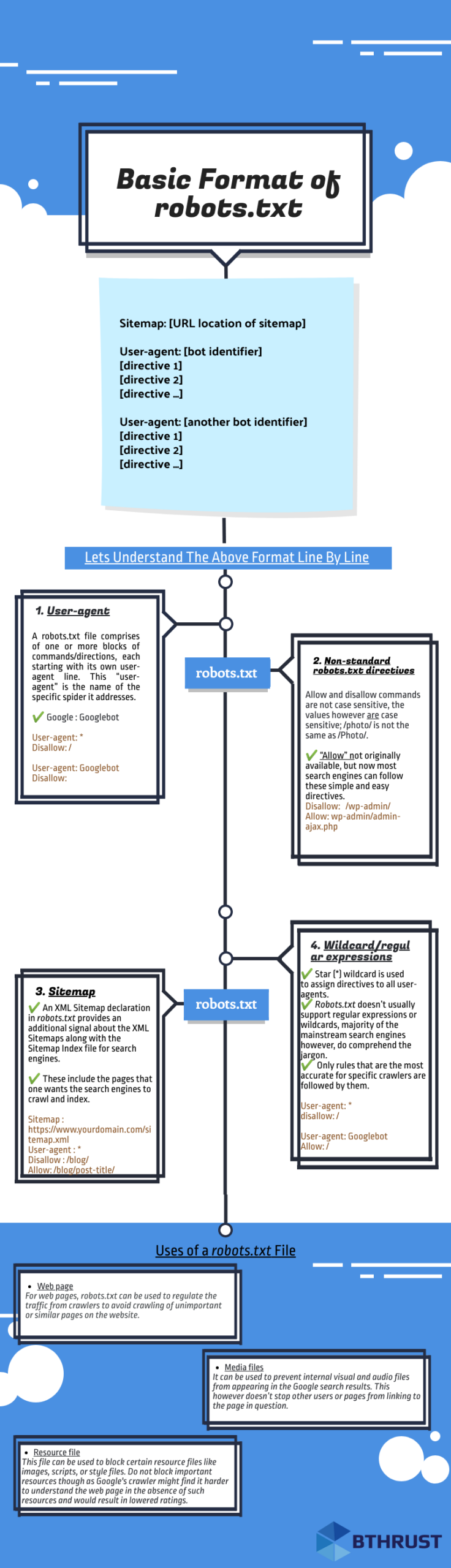

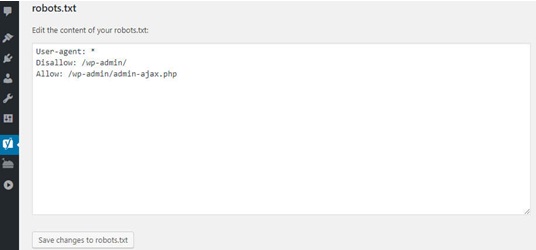

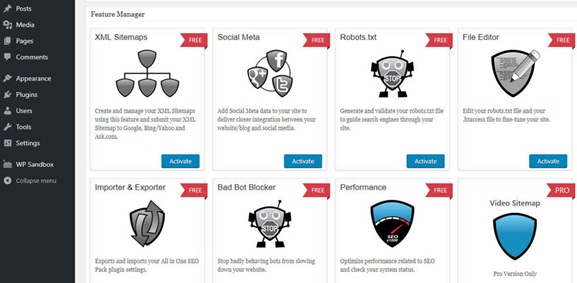

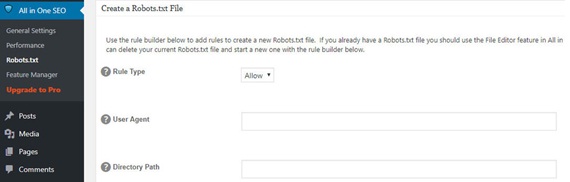

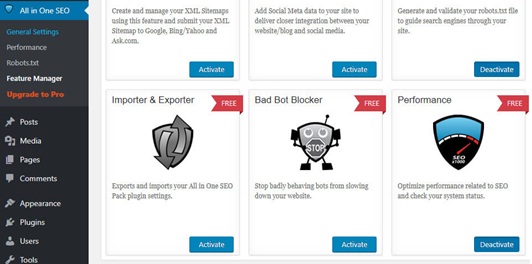

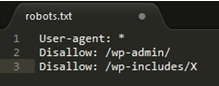

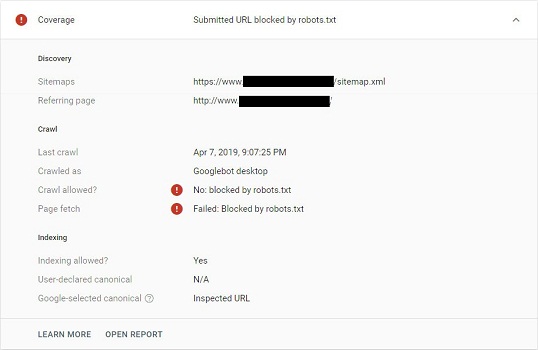

A robots.txt file, also known as the robots exclusion protocol or standard, is a tiny text file that sits in the root directory of a website. Designed to work with search engines, it’s been moulded into a

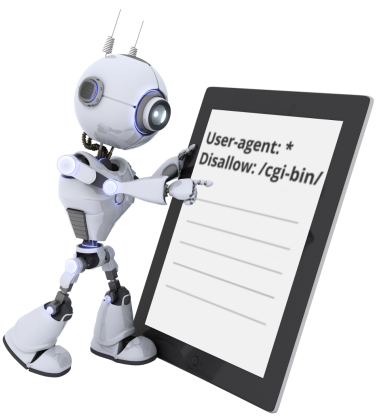

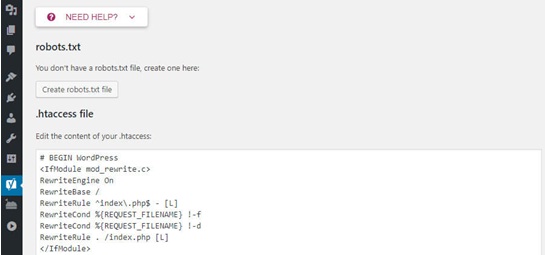

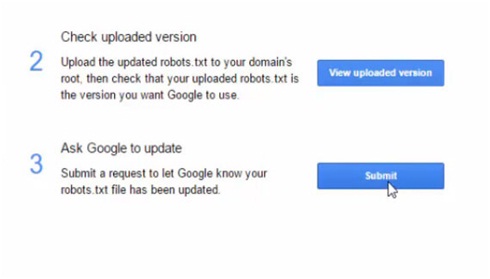

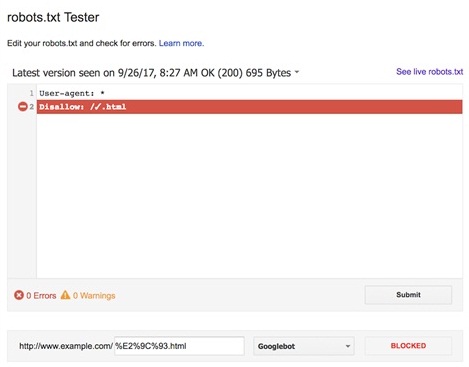

4) The platform checks the file for technical errors and points out any that it finds.

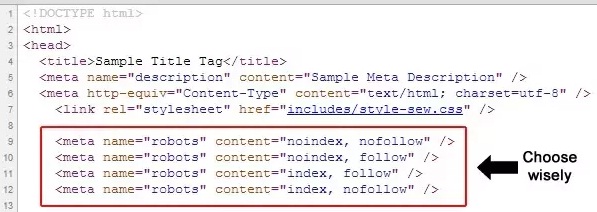

The meta robots tag provides extra, page-specific functions that can’t be implemented in a robots.txt file. robots.txt lets us control the crawling of web pages and resources by search engines; the meta robots tag, on the other hand, lets us control the indexing of a page and the crawling of the links on that page. Meta tags are most efficient for disallowing single files or pages, whereas robots.txt works best for disallowing whole sections of a site.
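The difference can be seen in a small sketch. The robots.txt rules, the `example.com` URLs, and the helper names below are assumptions chosen for illustration; the parsing itself uses Python's standard `urllib.robotparser` module.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks crawling of whole sections of a site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_crawl(url, agent="*"):
    """Check whether the robots.txt rules above allow a crawler to fetch the URL."""
    return parser.can_fetch(agent, url)


def meta_robots_tag(index=True, follow=True):
    """Build a page-level meta robots tag controlling indexing and link following."""
    directives = [
        "index" if index else "noindex",
        "follow" if follow else "nofollow",
    ]
    content = ", ".join(directives)
    return f'<meta name="robots" content="{content}">'


print(may_crawl("https://example.com/checkout/cart"))
print(may_crawl("https://example.com/products/shoes"))
print(meta_robots_tag(index=False, follow=True))
```

The robots.txt rules apply to every URL under `/checkout/` and `/admin/`, while the generated meta tag would be placed in the `<head>` of one specific page to keep only that page out of the index.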
