Over the past two years, the SEO field has changed enormously. As a result, many e-commerce marketers have overhauled their tactics. Ranking high in Google for competitive keywords is no longer as easy as it was three years ago.

As black hat SEO has become harder to carry out and less likely to produce results, a new kind of SEO has emerged under the name “negative SEO”.

This article will help you understand what negative SEO actually is and how to protect your online business from such attacks. If you are planning to build a website and keep it safe, follow the advice in this article.

What is Negative SEO?

Negative SEO is the practice of using black hat and unethical methods to destroy a competitor’s rankings in search engines. Negative SEO attacks take several forms:

● Website hacking

● Building hundreds of spam links to your website

● Copying your content and distributing it all over the web

● Pointing links at your website with anchor keywords such as poker online, Viagra, and many more

● Creating fake social profiles to destroy your online reputation

● Getting your website’s best backlinks removed

Is Negative SEO a Real Threat?

Negative SEO is undoubtedly real, and many websites have had to deal with it. Preventing it is easier than fixing it.

More than 15,000 people on Fiverr offer to carry out “negative SEO” for just $5.

In addition, people on black hat forums have written a lot about their successful techniques.

Google released the Disavow Tool to help webmasters deal with this issue, but the tool should be used with the utmost care and only as a last resort.

Take a look at Matt Cutts’s answer about negative SEO:

The tool usually takes 2-4 weeks to work. What if your website is penalized for a month? Can you afford that? Hardly anyone can. Below, I will show you how to prevent these attacks and keep your business secure.

How to Prevent Negative SEO Attacks

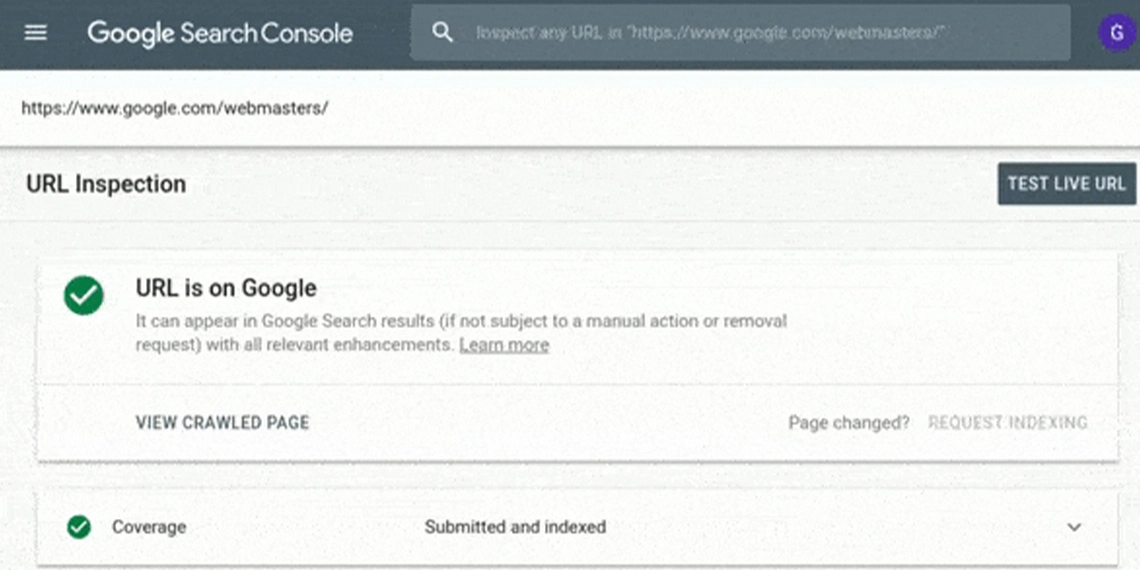

1. Set up Google Webmaster Tools Email Alerts

Google can notify you when:

● Malware attacks your website

● Your pages are not indexed

● Server connectivity issues arise

● You receive a manual penalty from Google

If you have not already done so, connect your website to Google Webmaster Tools.

Sign in to your account and click “Webmaster Tools Preferences.”

Enable email notifications and choose to receive alerts for all types of issues. Click “Save.”

This is the first step. Now let’s move on to the most important one: monitoring your backlink profile.

2. Keep Track of Your Backlinks Profile

This is the most important step to take to stop spammers from succeeding. Most often, they carry out negative SEO against your website by building low-quality links or redirects. It is vital to know when someone is building links or redirects to your website.

Tools like Open Site Explorer or Ahrefs can be used from time to time to check manually whether someone is building links to your website, but I would suggest MonitorBacklinks.com. It is one of the easiest and best tools for sending email notifications when your website gains or loses important backlinks.
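For readers who do check manually, the comparison itself is simple to automate. The following is a minimal sketch, assuming you export the list of linking URLs from whichever tool you use at regular intervals. The function name `diff_backlinks` and the sample URLs are hypothetical and not part of any tool mentioned here.

```python
def diff_backlinks(previous, current):
    """Compare two backlink snapshots and report what changed.

    Both arguments are iterables of linking-page URLs, for example the
    URL column of two exports taken a week apart (hypothetical data).
    """
    prev_set, curr_set = set(previous), set(current)
    return {
        "new": sorted(curr_set - prev_set),   # links that appeared
        "lost": sorted(prev_set - curr_set),  # links that disappeared
    }


# Hypothetical snapshots for illustration only.
last_week = [
    "https://blog.example.org/review",
    "https://news.example.net/story",
]
this_week = [
    "https://blog.example.org/review",
    "https://spam.example.com/page1",
]

changes = diff_backlinks(last_week, this_week)
print("New backlinks:", changes["new"])
print("Lost backlinks:", changes["lost"])
```

Anything in the "new" list that you do not recognize deserves a closer look, and anything in the "lost" list may be a good backlink that someone had removed.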

Instead of making you check manually, Monitor Backlinks sends everything you need to your inbox. Here is how to use it:

When you create your account, you will need to add your domain and connect it to your Google Analytics account.

It usually shows your backlinks instantly, although it may take a few minutes. By default, your settings are configured to send you email alerts when your website gets new backlinks. An email notification will look like this:

3. Protect Your Best Backlinks

Spammers will very often try to get your good backlinks removed. They usually contact the owner of the linking website, pretending to be you, and ask the webmaster to remove your backlink.

You can do two things to prevent this from happening:

● Instead of using Yahoo or Gmail, always use an email address from your own domain when you communicate with webmasters. That way you can prove that you work for the website and are not someone pretending to be you. Your email address should look like this: yourname@yourdomain.com.

● Keep a record of your best backlinks. You can use Monitor Backlinks for this as well. Go through your list of backlinks and sort them by page rank or social activity.

Tag the backlinks you value most so you can verify whether any of them get removed.

Select your backlink and click “edit.”

Add your tag so you can filter and find these backlinks easily later.

Once you have finished creating your list, filter the backlinks by your tags and sort them to see whether their status has changed. If any of these links are removed, contact the webmaster and ask why they removed your link.
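Verifying that a tagged backlink is still in place can also be done by hand. The sketch below uses only the Python standard library and assumes you have already downloaded the HTML of the linking page; fetching it is left out. The names `LinkCollector` and `links_to_domain` are hypothetical helpers written for this illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def links_to_domain(page_html, domain):
    """Return True if the page contains at least one link to `domain`."""
    collector = LinkCollector()
    collector.feed(page_html)
    for href in collector.hrefs:
        # Relative links have no hostname and can never point at us.
        host = urlparse(href).hostname or ""
        if host == domain or host.endswith("." + domain):
            return True
    return False


# Hypothetical page content for illustration only.
page = '<p>See <a href="https://www.yourdomain.com/guide">this guide</a>.</p>'
print(links_to_domain(page, "yourdomain.com"))
```

If the function returns False for a page that used to link to you, that is the moment to contact the webmaster.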

4. Secure Your Website from Malware and Hackers

Security is very important. The last thing you need is spam on your site without you even being aware of it. There are several things you can do to protect your website:

● If you are using WordPress, install the Google Authenticator plugin and set up two-step verification. Each time you sign in to your WordPress website, you will be asked to enter a code generated by the Google Authenticator app on your smartphone (it is available for iOS and Android only).

● Create a strong password that includes numbers and special characters.

● Back up your files and database on a regular basis.

● If your website lets users upload files, talk to your hosting company and ask how antivirus software can be installed to prevent malware.
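As one illustration of the strong-password advice above, here is a minimal sketch that generates a random password with Python's standard `secrets` module. The function name `generate_password` and the default length of 16 are assumptions made for this example, not a requirement of WordPress or any other platform.

```python
import secrets
import string


def generate_password(length=16):
    """Generate a random password with letters, digits and punctuation."""
    if length < 4:
        raise ValueError("length must be at least 4")
    alphabet = string.ascii_letters + string.digits + string.punctuation
    while True:
        password = "".join(secrets.choice(alphabet) for _ in range(length))
        # Retry until every character class is represented.
        if (any(c.islower() for c in password)
                and any(c.isupper() for c in password)
                and any(c.isdigit() for c in password)
                and any(c in string.punctuation for c in password)):
            return password


print(generate_password())
```

Store the result in a password manager rather than reusing it across sites.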

5. Check for Duplicate Content

Content duplication is one of the most common techniques spammers use. They simply copy your website’s content and paste it everywhere they can. If most of your content is duplicated, there is a good chance your site will be penalized and lose rankings.

Copyscape.com lets you check whether your website has duplicates elsewhere on the web. Just add your website or the body of the article you want to check, and it will show whether your content has been copied and published somewhere else without your permission.
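Once you have found a suspicious page, you may want a rough measure of how much of your article it reuses. The sketch below compares overlapping five-word sequences ("shingles") between two texts. This is a generic text-overlap technique chosen for illustration, not how Copyscape works internally, and the function names are hypothetical.

```python
import re


def shingles(text, size=5):
    """Return the set of overlapping word sequences of length `size`."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def copied_fraction(original, suspect, size=5):
    """Fraction of the original's shingles that also appear in the suspect."""
    original_shingles = shingles(original, size)
    if not original_shingles:
        return 0.0
    shared = original_shingles & shingles(suspect, size)
    return len(shared) / len(original_shingles)


# Hypothetical texts for illustration only.
mine = "Negative SEO is the practice of using unethical methods to harm rankings"
theirs = "Welcome to our blog. " + mine + " Read more below."
print(f"Copied: {copied_fraction(mine, theirs):.0%}")
```

A value close to 100% means nearly every passage of your text appears in the other page, while a low value suggests only incidental overlap.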

6. Monitor Your Social Media Mentions

Spammers will sometimes create fake social media accounts using your company or website name. Try to get these profiles removed by reporting them as spam before they start gaining followers.

With tools like Mention.net, you can find out who is using your company name.

You will be notified as soon as someone mentions your name on social media or any website, and you can then decide whether to take action.

Create an account and click “create an alert.” Give your alert a name and add the keywords you want to be alerted about. You can also use multiple languages. Then click “Next step.”

Choose the sources you want Mention.net to search, and add any domains you want it to ignore. Click “Create my alert,” and you will be notified whenever your keyword (brand name) appears on blogs, news sites, forums, and social media.

7. Watch Your Website Speed

If you notice that your website has suddenly become very slow to load, check whether your server is receiving thousands of requests per second. If you do not act to stop this, spammers may bring your server down.

Pingdom.com is a great tool for monitoring your server’s uptime and loading time.

Create an account and enable “email alerts” so that you will know when your site is down. If you find that your website is under attack, contact your hosting company immediately and ask for support.
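If you have access to your server's access log, checking for a flood of requests can be sketched as follows. The example assumes log lines in the Common Log Format, where the timestamp appears in square brackets with one-second resolution. The function name `busy_seconds` and the threshold value are hypothetical and should be adapted to your normal traffic.

```python
import re
from collections import Counter

# Matches the bracketed timestamp, e.g. [10/Oct/2023:13:55:36 +0000]
TIMESTAMP = re.compile(r"\[([^\]]+)\]")


def busy_seconds(log_lines, threshold):
    """Return {timestamp: request_count} for seconds above `threshold`."""
    per_second = Counter()
    for line in log_lines:
        match = TIMESTAMP.search(line)
        if match:
            per_second[match.group(1)] += 1
    return {ts: n for ts, n in per_second.items() if n > threshold}


# Hypothetical log lines for illustration only.
sample = (
    ['203.0.113.5 - - [10/Oct/2023:13:55:36 +0000] "GET / HTTP/1.1" 200 512'] * 5
    + ['198.51.100.7 - - [10/Oct/2023:13:55:37 +0000] "GET /about HTTP/1.1" 200 300']
)
print(busy_seconds(sample, threshold=3))
```

Seconds that show up in the result are worth passing on to your hosting company when you ask for support.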

8. Don’t be a Victim of Your Own SEO Strategies

Make sure you are not hurting your own website’s rankings by using techniques that Google does not accept. Here are some things you should not do:

● Never link to penalized websites.

● Never buy links for SEO or from blog networks.

● Never publish large numbers of low-quality guest posts.

● Never build too many backlinks to your site using “money keywords.” At least 60% of your anchor texts should use your website name.

● Never sell links on your site without using the “nofollow” attribute.
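The 60% guideline for anchor texts above is easy to check against an exported list of anchors. The following is a minimal sketch; the function name `branded_anchor_share`, the brand terms, and the sample anchors are all hypothetical.

```python
def branded_anchor_share(anchor_texts, brand_terms):
    """Return the fraction of anchor texts that mention a brand term."""
    if not anchor_texts:
        return 0.0
    terms = [t.lower() for t in brand_terms]
    branded = sum(
        1 for anchor in anchor_texts
        if any(term in anchor.lower() for term in terms)
    )
    return branded / len(anchor_texts)


# Hypothetical anchor texts for illustration only.
anchors = [
    "Example Shop",
    "example-shop.com",
    "Example Shop reviews",
    "cheap running shoes",
    "buy shoes online",
]
share = branded_anchor_share(anchors, ["example shop", "example-shop.com"])
print(f"Branded anchors: {share:.0%}")
```

If the share drops well below the guideline, either your own link building relies too heavily on money keywords or someone else is building keyword-rich links to your site.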

9. Don’t Make Enemies Online

There is no reason to make enemies, and you should never get into an argument with clients, because you never know who you are dealing with. There are three kinds of spammers, and their reasons for spamming are as follows:

● For fun

● For revenge

● To outrank competitors in search engines

How to Combat Negative SEO against Your Website

If you find that someone has started a negative SEO campaign against your brand, here is what you can do:

1. Create a List with the Backlinks You Should Remove

Look for the links to your website that were built recently and pick out the bad ones so you can try to get them removed. Tag the harmful links. Review them manually and decide which ones are hurting your rankings and should be removed.

Create this list as soon as you receive email alerts about new backlinks that you do not recognize and that look like spam.
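A first pass over new backlinks can be sketched in a few lines. The example below flags links whose anchor text contains one of the spam keywords mentioned earlier in this article. The keyword list, the function name `flag_suspicious`, and the sample data are assumptions for illustration, and flagged links still need the manual review described above.

```python
# Example keywords taken from this article; extend the list as needed.
SPAM_KEYWORDS = ("poker online", "viagra")


def flag_suspicious(backlinks, keywords=SPAM_KEYWORDS):
    """Return the backlinks whose anchor text contains a spam keyword.

    `backlinks` is a list of (source_url, anchor_text) pairs.
    """
    flagged = []
    for source_url, anchor_text in backlinks:
        anchor = anchor_text.lower()
        if any(keyword in anchor for keyword in keywords):
            flagged.append((source_url, anchor_text))
    return flagged


# Hypothetical new backlinks for illustration only.
new_links = [
    ("https://blog.example.org/review", "Example Shop"),
    ("https://spam.example.com/page1", "Play Poker Online now"),
]
for url, anchor in flag_suspicious(new_links):
    print(url, "->", anchor)
```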

2. Try to Remove the Bad Links

Once you know which backlinks should be removed, contact the webmaster of each site and ask them to remove the link. If you cannot find a contact page, use Whois.com/Whois to find a contact email address.

Enter the root domain of the website you are trying to contact, then look for “Registrant email.”

If you do not get an answer or the link is not removed, you can contact the company that hosts the website and ask them to remove the spam links. Most hosting companies will help you get the links removed.

You can find out who is hosting the site on WhoIsHostingThis.com.

3. Create a Disavow List

Use the Google Disavow Tool if you receive a manual penalty. If the methods above do not work, create a disavow list that you can later submit through Google Webmaster Tools.

You can easily create this list from your MonitorBacklinks.com account.
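If you prefer to build the file yourself, the sketch below writes a disavow list as plain text. It assumes the file format Google documents for the tool: one entry per line, a `domain:` prefix to disavow a whole domain, a full URL to disavow a single page, and `#` for comment lines. The function name `build_disavow_file` and the sample entries are hypothetical.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Return the text of a disavow file.

    Assumes the documented format: one entry per line, a `domain:` prefix
    for whole domains, full URLs for single pages, `#` for comments.
    """
    lines = []
    if note:
        lines.append(f"# {note}")
    # Deduplicate and sort so the file is stable between runs.
    lines.extend(f"domain:{d}" for d in sorted(set(domains)))
    lines.extend(sorted(set(urls)))
    return "\n".join(lines) + "\n"


# Hypothetical entries for illustration only.
text = build_disavow_file(
    domains=["spam.example.com"],
    urls=["http://bad.example.net/page.html"],
    note="Owner contacted, no reply",
)
print(text, end="")
```

Save the result as a plain `.txt` file before uploading it, and keep in mind the earlier advice to treat disavowing as a last resort.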

Conclusion

If you want your website to succeed, always pay attention to search engine impressions and website security, both of which are very important. This is a short summary of the things you can do to protect your website from negative SEO attacks:

Do you use any other techniques to prevent negative SEO? Have you ever experienced such an attack? What other tools do you use for this?